Dataset Division, Model Fit, Model Indicators, and Feature Engineering in Machine Learning

Division of data sets:

- Training set – The sample data the model learns from; the model is built by fitting its parameters to this data. Analogy: the practice problems you work through before the postgraduate entrance exam.

- Validation set – Used to tune the learned model, for example by choosing the number of hidden units in a neural network. The validation set is also used to decide the network structure or the parameters that control the complexity of the model. Analogy: a mock exam before the real exam.

- Test set – Used to measure how well the trained model performs on data it has not seen. Analogy: the entrance exam itself, the one that really counts.
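The three-way division above can be sketched in a few lines. This is only an illustration: the 60/20/20 ratio, the function name `split_dataset`, and the fixed seed are assumptions made for the example, not values given in this article.

```python
import random

def split_dataset(samples, train_frac=0.6, val_frac=0.2, seed=0):
    """Shuffle the samples, then cut them into train / validation / test lists.

    The fractions are illustrative assumptions; whatever is left after the
    training and validation cuts becomes the test set.
    """
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # shuffle so each subset is a random sample
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test

train_set, val_set, test_set = split_dataset(range(100))
print(len(train_set), len(val_set), len(test_set))
```

The key property is that the three subsets do not overlap, so the test set really does contain questions the model has never practiced on.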

Model fit:

- Underfitting: The model does not capture the features of the data and does not fit the data well. The general properties of the training samples have not been learned. Analogy: if you never work through the exercise book, you feel you can do everything; only in the examination room do you find out that you cannot.

- Overfitting: The model learns the training samples "too well". It may treat characteristics specific to the training samples as general properties of all potential samples, which reduces its ability to generalize. Analogy: you get every after-class exercise right and even treat out-of-syllabus questions as sure to appear on the exam, yet in the examination room you still cannot answer the real questions.

In general, under-fitting and over-fitting can each be summed up in one sentence. Under-fitting is: "You are too naive!"; over-fitting is: "You think too much!".
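A small sketch can make the two failure modes concrete. Everything here is an assumption chosen for illustration: the synthetic data (y = 2x plus noise), a constant predictor standing in for an underfit model, and a model that simply memorizes the training points standing in for an overfit one.

```python
import random

rng = random.Random(0)

def make_data(xs):
    # Hypothetical ground truth: y = 2x plus Gaussian noise.
    return [(x, 2 * x + rng.gauss(0, 1)) for x in xs]

train = make_data(range(0, 20, 2))  # even x values
test = make_data(range(1, 20, 2))   # odd x values, never seen in training

def mse(model, data):
    """Mean squared error of a model over (x, y) pairs."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)

# Underfit ("too naive"): ignores x and always predicts the mean training label.
mean_y = sum(y for _, y in train) / len(train)

def underfit(x):
    return mean_y

# Overfit ("thinks too much"): memorizes the training set, noise included,
# and answers with the label of the nearest training x.
def overfit(x):
    return min(train, key=lambda point: abs(point[0] - x))[1]

for name, model in [("underfit", underfit), ("overfit", overfit)]:
    print(name, "train MSE:", mse(model, train), "test MSE:", mse(model, test))
```

The underfit model is poor even on the data it was trained on. The memorizing model scores a perfect zero on the training set but not on the test set; that gap between training and test error is the usual symptom of overfitting.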

Common model indicators:

- Precision – the number of correct pieces of information extracted / the number of pieces of information extracted

- Recall – the number of correct pieces of information extracted / the number of pieces of information in the sample

- F value – precision * recall * 2 / (precision + recall) (the F value is the harmonic mean of precision and recall)

Here is an example:

A pond has 1,400 squid, 300 shrimp, and 300 turtles. The goal is to catch squid. One cast of the net catches 700 squid, 200 shrimp, and 100 turtles. The indicators are then as follows:

Precision = 700 / (700 + 200 + 100) = 70%

Recall = 700 / 1400 = 50%

F value = 70% * 50% * 2 / (70% + 50%) = 58.3%
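The same arithmetic can be checked with a short script. The function name `precision_recall_f` and the dictionary layout are assumptions made for this sketch; the counts are the ones from the pond example.

```python
def precision_recall_f(true_positive, predicted_positive, actual_positive):
    """Return (precision, recall, F value) from raw counts."""
    precision = true_positive / predicted_positive  # correct catches / everything caught
    recall = true_positive / actual_positive        # correct catches / all targets in the pond
    f_value = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f_value

caught = {"squid": 700, "shrimp": 200, "turtle": 100}
pond = {"squid": 1400, "shrimp": 300, "turtle": 300}

p, r, f = precision_recall_f(
    true_positive=caught["squid"],
    predicted_positive=sum(caught.values()),
    actual_positive=pond["squid"],
)
print(f"precision={p:.1%} recall={r:.1%} F={f:.1%}")
# prints: precision=70.0% recall=50.0% F=58.3%
```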

Model:

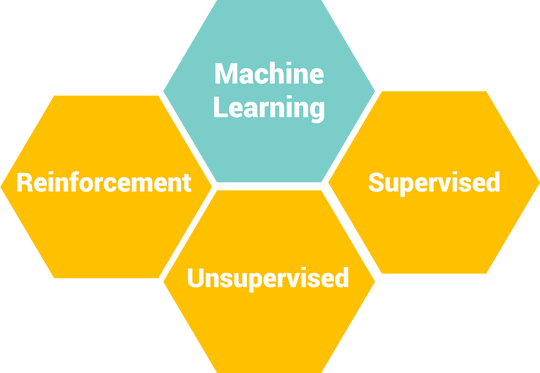

- Classification problem – Put simply, it assigns data of unknown category to one of the currently known categories. For example, judging from some information about you whether you are rich and handsome, or poor. The three indicators used to judge classification quality are the ones described above: precision, recall, and F value.

- Regression problem – A supervised learning task that predicts and models continuous numerical variables. The accuracy of a regression model is usually judged by computing the error between its predictions and the actual values.

- Clustering problem – Clustering is an unsupervised learning task that finds the natural groups (i.e. clusters) of the observed samples based on the internal structure of the data. Clustering quality is generally judged by distance: intra-cluster distance and inter-cluster distance. The smaller the intra-cluster distance the better, meaning the elements within a cluster are more similar; the larger the inter-cluster distance the better, meaning the elements of different clusters are more different. In general, a clustering measure is a formula that combines the intra-cluster distance and the inter-cluster distance.
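The error-based and distance-based criteria from the list above can be sketched as follows. The article does not name a specific error or a specific combined formula, so mean squared error, centroid-based distances, and the inter/intra ratio used here are all assumptions picked for illustration.

```python
import math
from itertools import combinations

def mean_squared_error(y_true, y_pred):
    """Regression error: average squared gap between actual and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def centroid(points):
    """Coordinate-wise mean of a list of points."""
    return tuple(sum(coord) / len(points) for coord in zip(*points))

def intra_cluster_distance(cluster):
    """Average distance from each point to its own cluster centroid (smaller is better)."""
    center = centroid(cluster)
    return sum(math.dist(point, center) for point in cluster) / len(cluster)

def inter_cluster_distance(clusters):
    """Average distance between every pair of cluster centroids (larger is better)."""
    pairs = list(combinations([centroid(c) for c in clusters], 2))
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)

# Two hypothetical, well-separated clusters of 2-D points.
clusters = [
    [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)],
    [(10.0, 10.0), (10.0, 11.0), (11.0, 10.0), (11.0, 11.0)],
]
intra = sum(intra_cluster_distance(c) for c in clusters) / len(clusters)
inter = inter_cluster_distance(clusters)
score = inter / intra  # assumed combined measure: higher means tighter, better-separated clusters

print("regression MSE:", mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
print("intra:", intra, "inter:", inter, "score:", score)
```

A ratio is only one way to combine the two distances; the point is that a good clustering pushes the numerator up and the denominator down at the same time.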

Some basics of feature engineering:

- Feature Selection – Also called Feature Subset Selection (FSS). It refers to selecting N features from the existing M features so as to optimize a specific indicator of the system. It is the process of picking the most effective features from the original ones in order to reduce the dimensionality of the data set. It is an important means of improving algorithm performance and a key data preprocessing step in pattern recognition.

- Feature Extraction – In computer vision and image processing, feature extraction refers to using a computer to extract image information and decide whether each point of an image belongs to an image feature. The result is a division of the points of the image into subsets, which often correspond to isolated points, continuous curves, or continuous regions.
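As a rough sketch of "select N features from the existing M features", the snippet below ranks features by the absolute Pearson correlation with the target and keeps the top N. This scoring rule is an assumption made for the example (a simple filter-style method), and the feature names and values are made up.

```python
import math

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences; 0.0 if either is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0
    return cov / (spread_x * spread_y)

def select_features(columns, target, n):
    """Keep the n feature names whose values correlate most strongly with the target."""
    scores = {name: abs(pearson(values, target)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:n]

# M = 4 hypothetical features, one list of values per feature.
columns = {
    "a": [1.0, 2.0, 3.0, 4.0, 5.0],  # rises exactly with the target
    "b": [5.0, 3.0, 4.0, 1.0, 2.0],  # mostly falls as the target rises
    "c": [1.0, 1.0, 1.0, 1.0, 1.0],  # constant, carries no information
    "d": [2.0, 1.0, 2.0, 1.0, 2.0],  # alternates, unrelated to the target
}
target = [2.0, 4.0, 6.0, 8.0, 10.0]

selected = select_features(columns, target, n=2)  # N = 2
print(selected)
```

The data set shrinks from four columns to two, and the dropped columns are the ones that told the model nothing about the target.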
